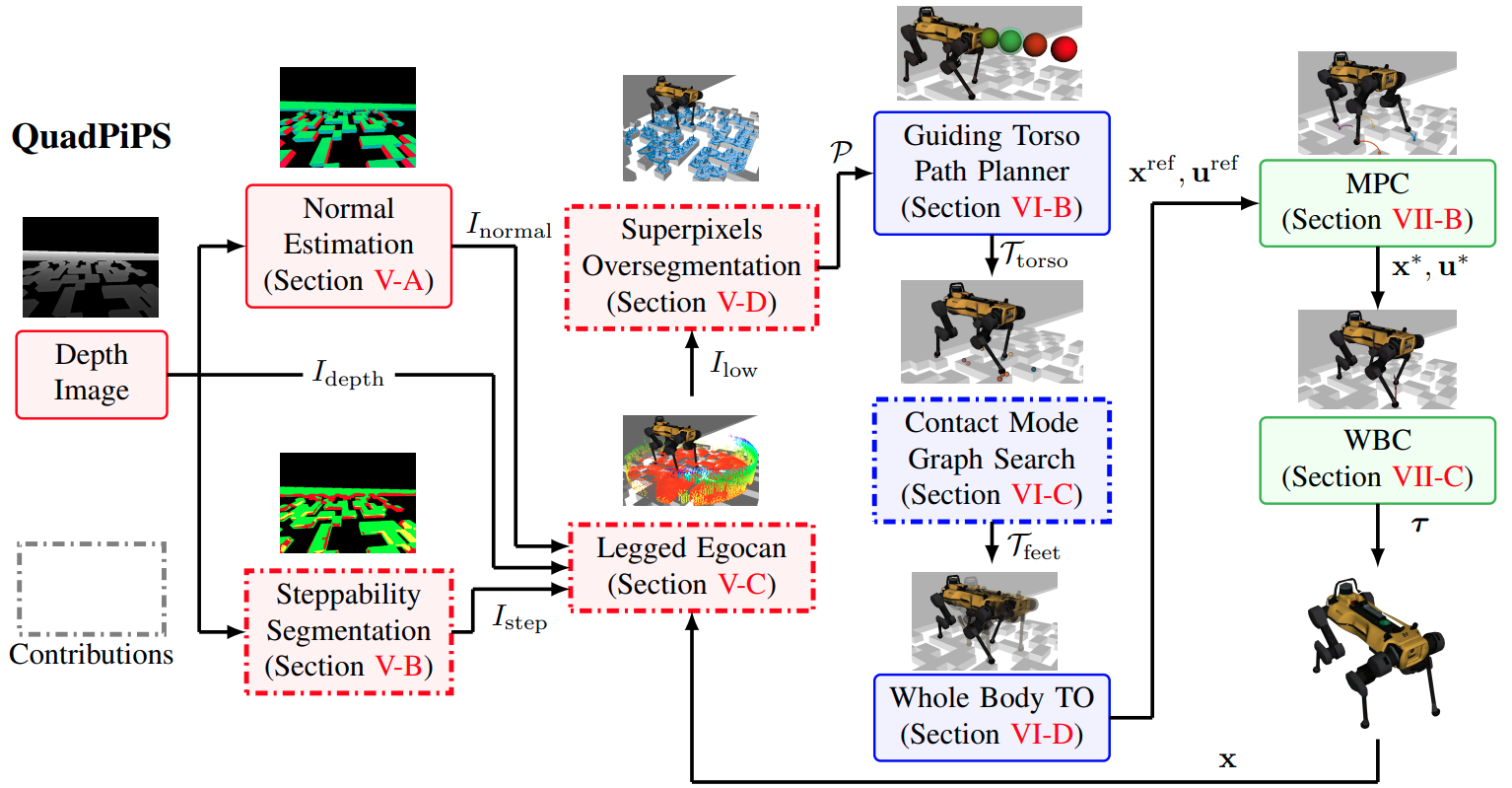

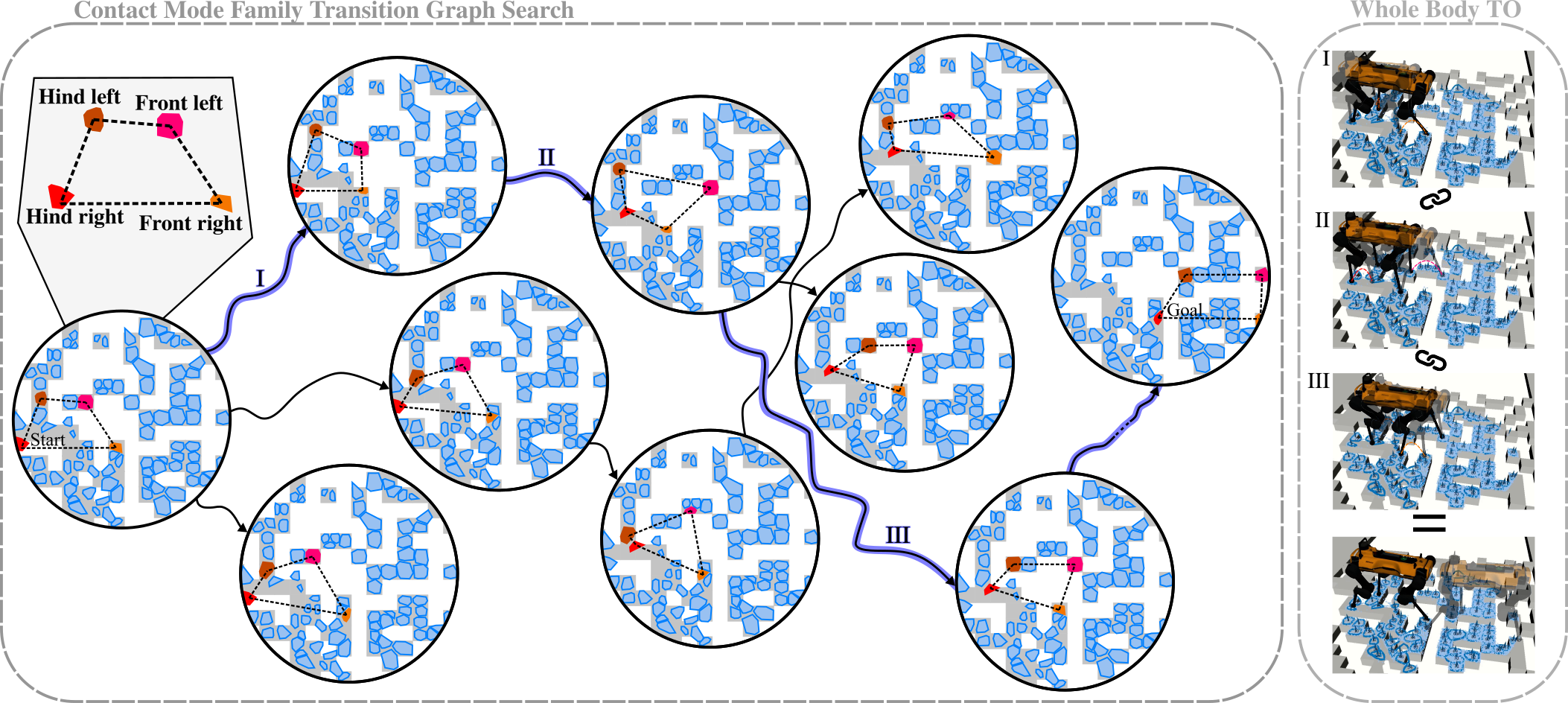

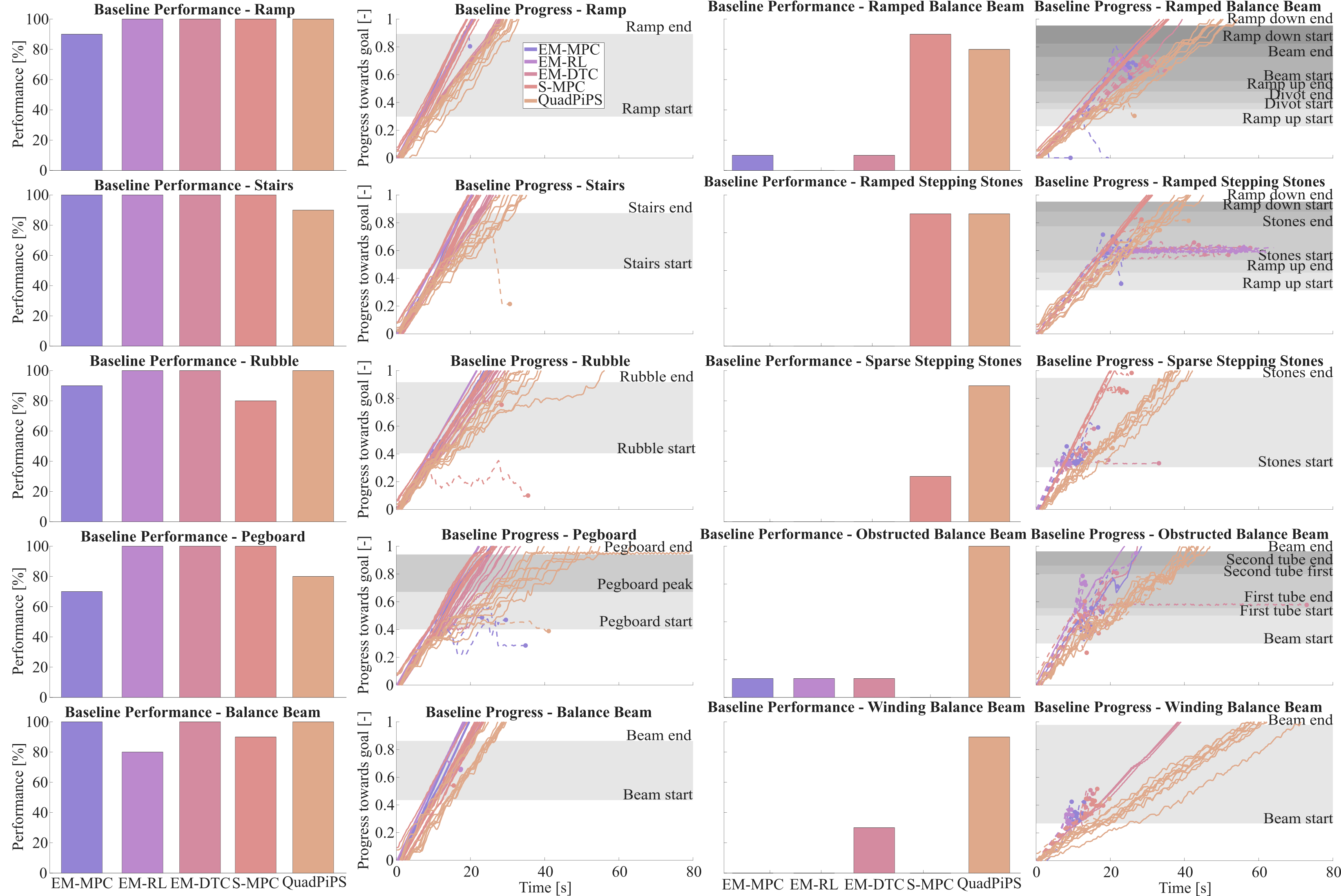

This work proposes QuadPiPS, a perception-informed framework for quadrupedal foothold planning in the perception space. QuadPiPS employs a novel ego-centric local environment representation, the legged egocan, which is extended here to capture affordances unique to legged robots through a joint geometric and semantic encoding that supports local motion planning and control for quadrupeds. QuadPiPS takes inspiration from the Augmented Leafs with Experience on Foliations (ALEF) planning framework to partition the foothold planning space into its discrete and continuous subspaces. To facilitate real-world deployment, QuadPiPS broadens the ALEF approach by synthesizing perception-informed, real-time, and kinodynamically feasible reference trajectories through search and trajectory optimization techniques. To support deliberate and exhaustive searching, QuadPiPS over-segments the egocan floor into superpixels, which provide a set of planar regions suitable as candidate footholds. Nonlinear trajectory optimization methods then compute swing trajectories that transition between the selected footholds and provide long-horizon whole-body reference motions, which are tracked with model predictive control and whole-body control. Benchmarking with the ANYmal C quadruped across ten simulation environments and against five baselines reveals that QuadPiPS excels in safety-critical settings with limited available footholds. Real-world validation on the Unitree Go2 quadruped, equipped with a custom computational suite, demonstrates that QuadPiPS enables terrain-aware locomotion on hardware.
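As a rough illustration of how an over-segmented floor can yield planar candidate footholds, the sketch below splits a height map into small regions, fits a least-squares plane to each, and keeps the regions that are flat and level enough. It is not the QuadPiPS implementation: a uniform grid stands in for superpixels, the height map stands in for the egocan floor, and the names `fit_plane` and `candidate_regions` as well as the slope and residual thresholds are hypothetical choices made for this example.

```python
import math


def fit_plane(block, cell):
    """Least-squares plane z = a*x + b*y + c over a full rectangular block.

    Coordinates are centred on the block, so sum(x) = sum(y) = sum(x*y) = 0
    and the normal equations decouple into three independent terms.
    Returns (a, b, c, max_abs_residual).
    """
    rows, cols = len(block), len(block[0])
    xs = [(j - (cols - 1) / 2) * cell for j in range(cols)]
    ys = [(i - (rows - 1) / 2) * cell for i in range(rows)]
    idx = [(i, j) for i in range(rows) for j in range(cols)]
    c = sum(block[i][j] for i, j in idx) / (rows * cols)
    sxx = rows * sum(x * x for x in xs)
    syy = cols * sum(y * y for y in ys)
    a = sum(xs[j] * block[i][j] for i, j in idx) / sxx
    b = sum(ys[i] * block[i][j] for i, j in idx) / syy
    resid = max(abs(block[i][j] - (a * xs[j] + b * ys[i] + c)) for i, j in idx)
    return a, b, c, resid


def candidate_regions(height, cell, size, max_slope_deg, max_resid):
    """Return grid regions that are planar and level enough to step on.

    height: 2D list of heights in metres; cell: grid resolution in metres;
    size: region edge length in cells (must be >= 2).
    Both thresholds are illustrative assumptions, not values from the paper.
    """
    regions = []
    for r0 in range(0, len(height) - size + 1, size):
        for c0 in range(0, len(height[0]) - size + 1, size):
            block = [row[c0:c0 + size] for row in height[r0:r0 + size]]
            a, b, c, resid = fit_plane(block, cell)
            slope_deg = math.degrees(math.atan(math.hypot(a, b)))
            if slope_deg <= max_slope_deg and resid <= max_resid:
                regions.append({"row": r0, "col": c0,
                                "height": c, "slope_deg": slope_deg})
    return regions


if __name__ == "__main__":
    # 4x4 height map at 5 cm resolution with a 30 cm step in the top-right.
    height = [[0.0] * 4 for _ in range(4)]
    height[0][3] = height[1][3] = 0.3
    for region in candidate_regions(height, cell=0.05, size=2,
                                    max_slope_deg=20.0, max_resid=0.01):
        print(region)
```

In this toy setup the region containing the step edge is rejected because its fitted plane is too steep, while the three flat regions survive as candidates. A discrete search would then choose among the surviving regions, and a continuous optimization would place the foot within the chosen one.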

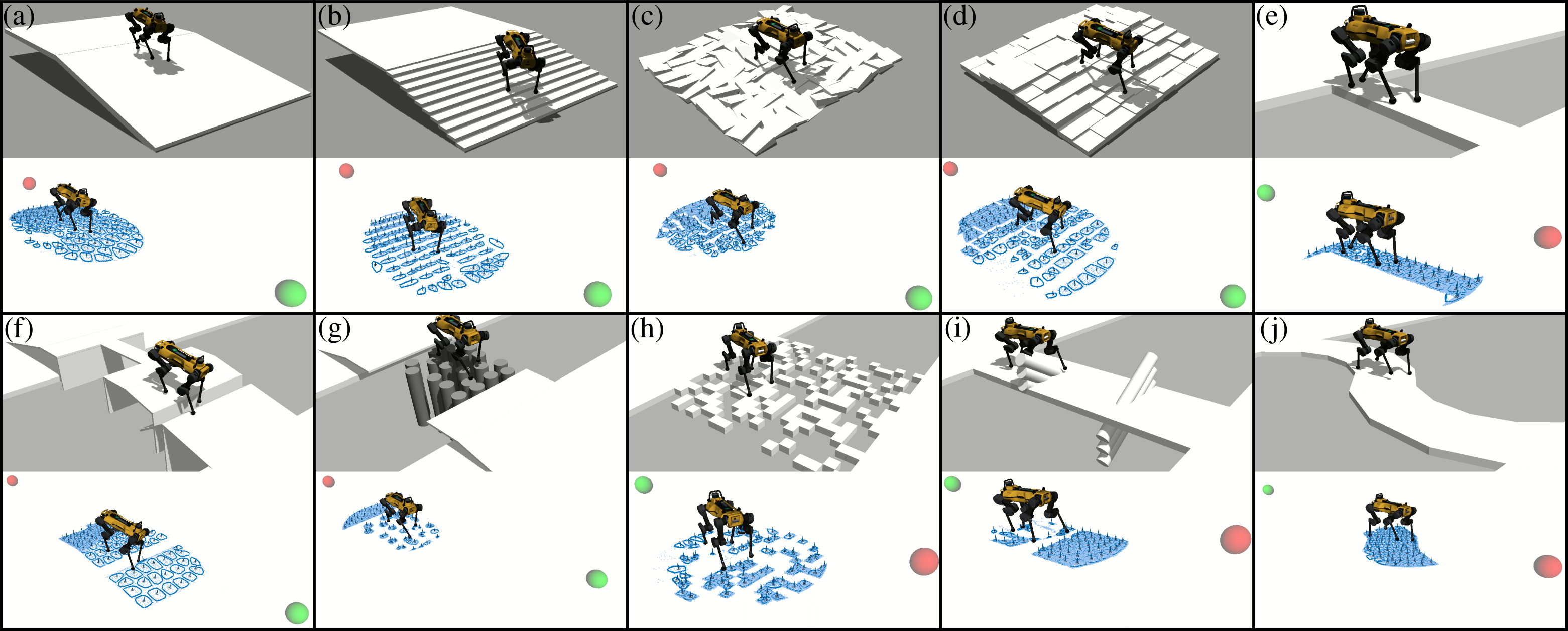

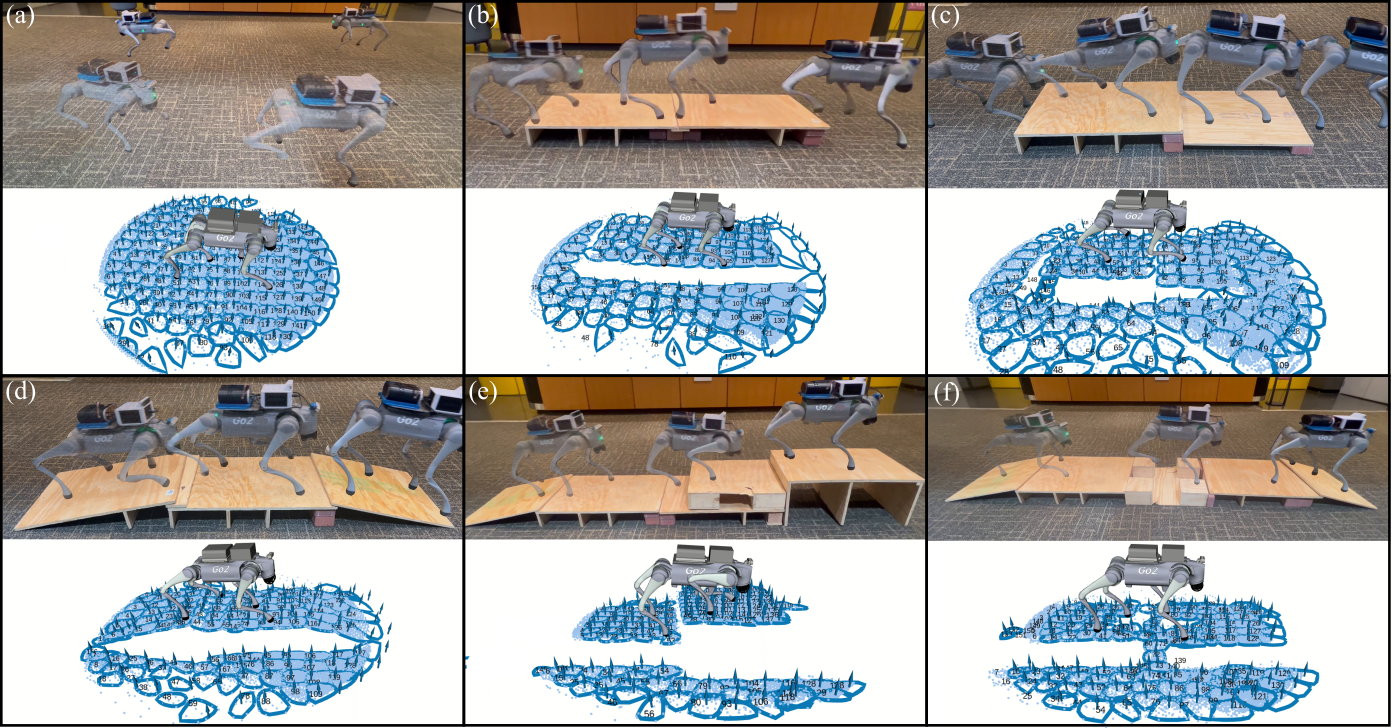

Visualizations of QuadPiPS hardware deployment in both indoor and outdoor settings. (a) Flat, (b) Single platform, (c) Double platform, (d) Ramped single platform, (e) Triple platform, (f) Ramped stepping stones platform. The top images show the real-world scene and the bottom images show the corresponding RViz visualization. In RViz, superpixel regions are depicted by blue points, normals, and boundaries.